The Agent Has to Prove It

What I picked up at a 'Shipping with Codex' event with Notion and OpenAI


There was a moment last Tuesday night when a Notion engineer dragged a task across a kanban board and something clicked for me.

The task moved into a column called “ready for agent.” A coding agent picked it up, read the spec, gathered context, started building, verified its own work, generated documentation explaining what it did and why, and handed everything back for a human to review. Accept or reject.

It was a familiar workflow, but performed by an agent.

I was at an evening called Shipping with Codex, co-hosted by Notion and OpenAI at Notion’s office in SoHo, where engineers from both teams showed how they build with coding agents. I was invited by Notion’s marketing team. I’ve been building enterprise agentic workflows at Adobe as well as personal agents for myself, so I went in excited.

The Notion engineering lead, Varun, opened with a line that I wrote down immediately.

“The agent’s job is not done until it has proven to you that it did the right thing.”

Not just done the right thing, but proven it, to you, the human.

That distinction changes how you design everything. If the agent writes good code but can’t show you what it changed and why, then sure, you’re building faster, but you’re also outsourcing the risk while keeping the accountability.

So Varun’s entire workflow is built around proof. Agents verify their own work with a range of tools before a human ever sees it. And when the human does see it, they don’t just get code; they get artifacts: documentation, interactive demos, and a conversation thread where they can interrogate the agent’s reasoning. In one example, the agent generated a lightweight HTML prototype of the PR, complete with edge cases you could click through and quizzes to test your own understanding.

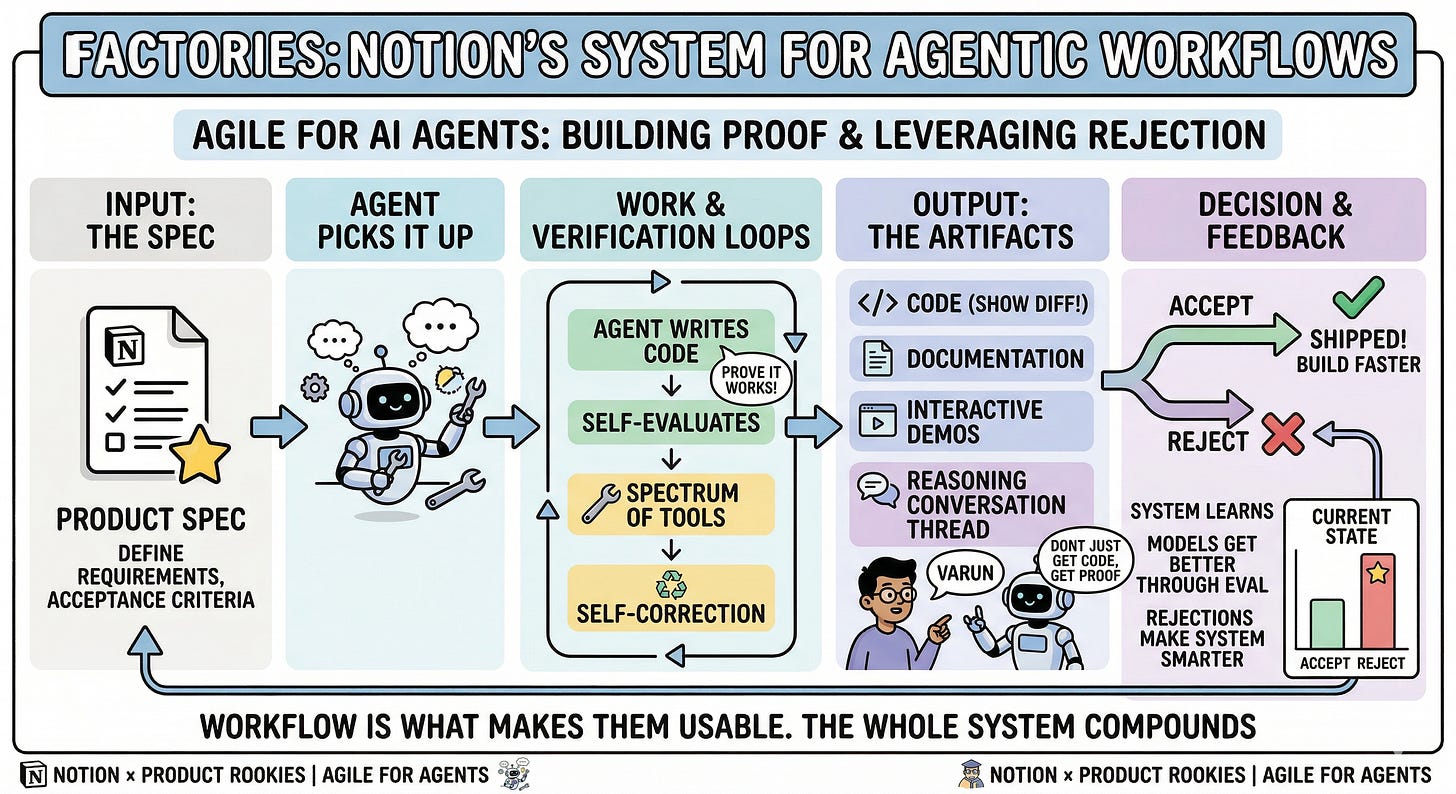

The system Notion built around this, which they call Factories, is basically agile for agentic workflows:

That’s the full loop: the spec is the input, proof is built in as a step, and the human stays at the decision point. Accept or reject.
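To make the shape of that loop concrete, here is a minimal Python sketch. It is an illustration only, not Notion's Factories implementation. The column names other than "ready for agent", the `Task` fields, and the `run_agent`, `hand_back`, and `review` functions are all hypothetical stand-ins. The one idea it tries to capture is the proof gate: a task cannot reach human review unless artifacts are attached.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Column(Enum):
    # Only "ready for agent" comes from the event; the rest are assumed names.
    READY_FOR_AGENT = "ready for agent"
    IN_PROGRESS = "in progress"
    READY_FOR_REVIEW = "ready for review"
    ACCEPTED = "accepted"
    REJECTED = "rejected"


@dataclass
class Task:
    spec: str                                        # the input to the loop
    column: Column = Column.READY_FOR_AGENT
    code: str = ""
    proof: list[str] = field(default_factory=list)   # docs, demos, check output
    feedback: str = ""


def hand_back(task: Task) -> None:
    """Proof gate: without artifacts, the task never reaches a reviewer."""
    if not task.proof:
        raise ValueError("agent's job is not done: no proof attached")
    task.column = Column.READY_FOR_REVIEW


def run_agent(task: Task) -> None:
    """Hypothetical stand-in for a coding agent: build, then attach proof."""
    if task.column is not Column.READY_FOR_AGENT:
        raise ValueError("agent only picks up tasks marked ready for agent")
    task.column = Column.IN_PROGRESS
    task.code = f"# code implementing: {task.spec}"
    task.proof = [
        f"verification: checks passed for '{task.spec}'",
        f"documentation: what changed and why for '{task.spec}'",
    ]
    hand_back(task)


def review(task: Task, accept: bool, feedback: str = "") -> None:
    """The human decision point: accept or reject, with optional feedback."""
    if task.column is not Column.READY_FOR_REVIEW:
        raise ValueError("only tasks handed back with proof can be reviewed")
    task.column = Column.ACCEPTED if accept else Column.REJECTED
    task.feedback = feedback


task = Task(spec="add CSV export to the reports page")
run_agent(task)
review(task, accept=False, feedback="spec should name the column order")
print(task.column.value)  # prints "rejected"
```

In this sketch a rejection carries feedback back onto the task, which is one simple way to model how a rejected task could inform the next version of the spec.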

The reject column was still longer than the accept column, and Varun was honest about that. But every rejection sharpens the specs, improves the skills, and teaches the system what the team actually expects. The models will get better; the workflow is what makes them usable.

Here’s where my brain went:

I’ve vibe-coded apps on a Saturday afternoon and written about how fun that is. It is fun. The creative energy of going from idea to deployed in an hour is something I think everyone should experience and keep exploring.

But there’s a gap between that and shipping something at scale to actual users: something with stakes, where the code needs to be understood, reviewed, and trusted by other people or even by future you.

What I saw in that room was the bridge, and it’s a workflow any builder can adopt, whether you’re shipping to millions of users or building something for yourself that you want to actually maintain.

I keep coming back to why this felt so significant to me, and I think it connects to something I care about beyond product or engineering.

We’re in a moment where the dominant narrative around AI is about removing friction, removing steps, removing humans. Speed as the measure of everything.

But what I noticed was an engineer who had deliberately added steps. Added proof, explainability, and structure. The result was a system that was both faster and more trustworthy.

This feels like a pattern worth paying attention to, even beyond software, in how we think about any system where humans and machines need to work together.

The agent has to prove it, and we have to build the system that demands the proof.

— Akash